In digital marketing, we often take analytics data at face value and make game-changing business decisions based on information that, perhaps, should not be given such weight. Before deciding on how to make an investment in your organization, every data point should be given the relevant scrutiny. I’m here to tell you that you should consider developing an atomic understanding of your tool’s data collection and computation methodologies before you start betting the farm. I’m also here to say that even if you do, the environment is about to change dramatically.

Analytics Was Always Flawed

You’ve probably noticed that it is very rare for the data that an individual marketing channel provides (e.g. Facebook Analytics) to have parity with that of your primary analytics package. You may be surprised to find out that different analytics packages (e.g. Google Analytics vs Adobe Analytics) have different measurement methodologies. That is to say, how a session or visit is defined in GA is not the same way it is defined in the analytics program formerly known as Omniture. So, even if you have both installed and configured similarly, you’ll likely see different data.

For SEOs, this is likely why Google Analytics and Google Search Console don’t match either. My educated guess is that since GSC uses query and click logs while Google Analytics is clickstream, the methodologies for measurement were never likely to match up.

In some cases, clients take measures (no pun) into their own hands and implement a first-party or otherwise self-hosted analytics system. That, too, can introduce additional flaws into the measurement discussion in the same way that building your own CMS can leave you blind to best practices.

No matter what, the data you get from any analytics has never been 100% of what is happening on your site — and it never will be.

How Analytics Works and Why It’s Flawed

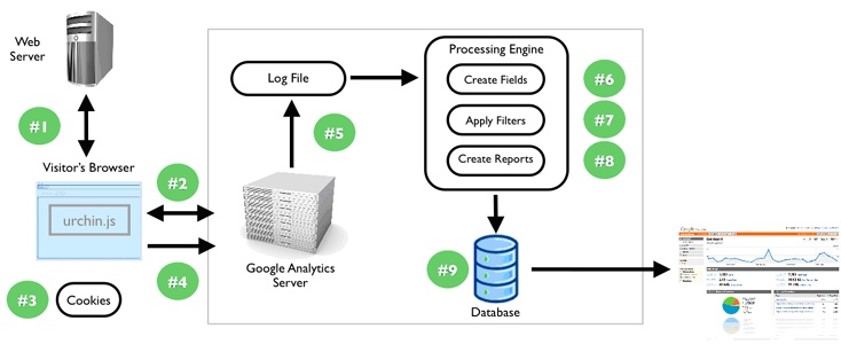

Analytics comes in a variety of forms, but what we’re talking about here is clickstream analytics packages such as GA, AA, et al. These tools operate through the placement of a JavaScript snippet on individual web pages. Once the page loads that JS snippet, it sends data about the user and their behavior back to a centralized server. That server stores that information in a database (Urchin, the predecessor to GA, used a database in the SQL family) for display, analysis, and export via a fancy interface.

Each tool makes a determination of what a session, a unique user, or a page view is. You determine what is an event and/or conversion. Beyond that, they all basically work the same. Cookies are generally used to determine whether or not a user has been seen before and they act as a storage mechanism. This differs from log file analytics in that it requires JS to execute and browser storage in order to work. If you’re curious, log files are generated at the server level and are a reaction to every access operation triggered by any user agent. Because clickstream analytics depends on JavaScript, it can be disrupted in a variety of purposeful, accidental, and nefarious ways.
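To make the contrast with log file analytics concrete, here’s a minimal Python sketch that counts requests per user agent straight from server access log lines, no JavaScript or cookies required. It assumes the widely used “combined” access log layout; the sample lines, the `LOG_PATTERN` regex, and the function name `count_requests_by_agent` are all made up for illustration.

```python
import re
from collections import Counter

# Assumed layout (combined log format):
# host ident user [time] "request" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def count_requests_by_agent(lines):
    """Count parsed requests per user agent string."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match:  # lines that do not fit the assumed layout are skipped
            counts[match.group("agent")] += 1
    return counts


# Hypothetical log lines: two hits from a Safari user, one from a bot.
SAMPLE_LINES = [
    '203.0.113.5 - - [24/Apr/2019:10:00:00 +0000] "GET / HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (Macintosh) Safari/605.1.15"',
    '203.0.113.5 - - [24/Apr/2019:10:00:05 +0000] "GET /pricing HTTP/1.1" 200 2048 '
    '"https://example.com/" "Mozilla/5.0 (Macintosh) Safari/605.1.15"',
    '198.51.100.7 - - [24/Apr/2019:10:01:00 +0000] "GET / HTTP/1.1" 200 5120 '
    '"-" "ExampleBot/1.0"',
]

if __name__ == "__main__":
    for agent, hits in count_requests_by_agent(SAMPLE_LINES).most_common():
        print(hits, agent)
```

Notice that the bot shows up here even though it would never execute an analytics snippet, which is exactly the kind of traffic clickstream tools don’t see.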

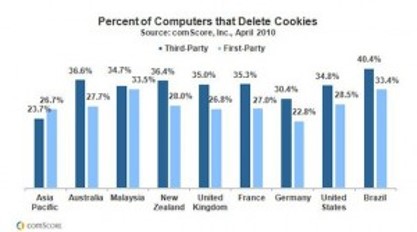

On the nefarious side, it’s actually quite easy to inject incorrect data into GA as a third party. On the other end of the spectrum, there are those users who delete or disable cookies (hello EU regulations!), disable JavaScript, or have ad blockers that may not have previously whitelisted certain analytics platforms. In situations where connections are slow, users may potentially stop the load of the page before analytics fires.

By that token, your analytics has never been reflective of 100% of the users or their behaviors. Some percentage of your “new users” or sessions have always been repeat users or the same session respectively. Some percentage of users have never been captured. And, it’s even possible that someone has been firing data into your analytics install remotely to inflate or deflate your performance. All this is before we even consider the sampling that Google Analytics does if you’re not on the paid version.

And, guess what: It’s all about to get worse.

Let’s Start with ITP

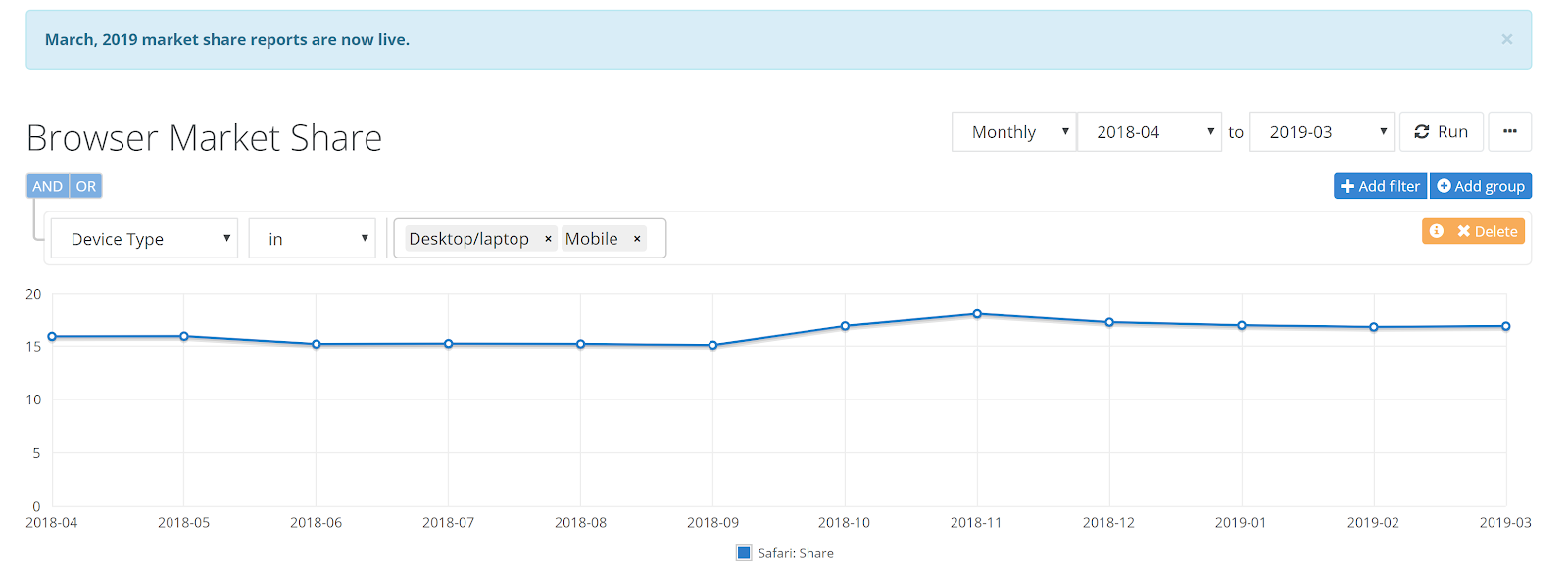

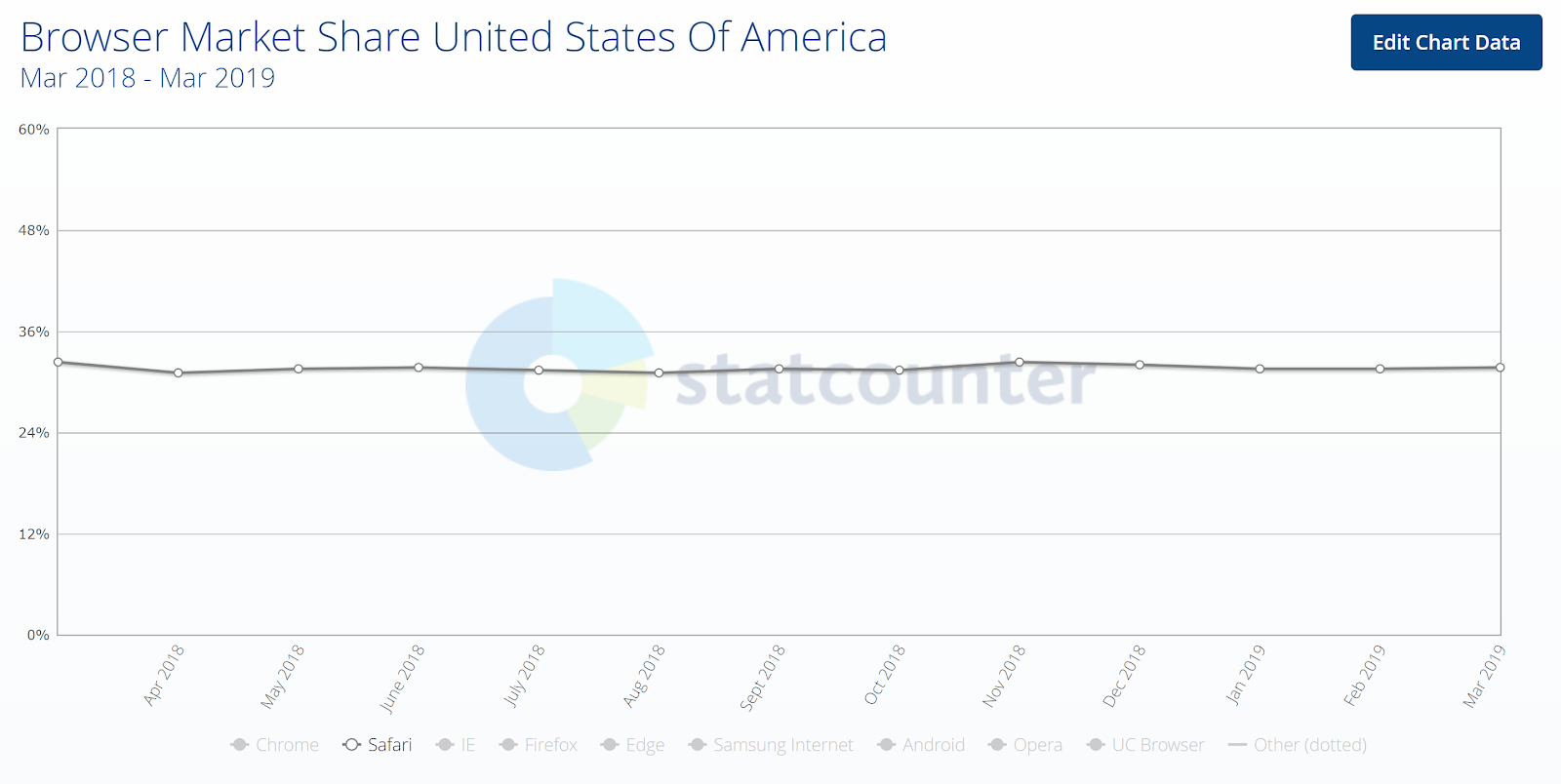

In mid-2017, Apple introduced an initiative that they call “Intelligent Tracking Prevention.” Initially, it looked to kill the ability of third parties to track users across multiple sites. As they have introduced subsequent versions, it has evolved to curb even first-party cookies by capping their lifespan at seven days. This specifically applies to Safari, which has 16.38% of the browser share on mobile and desktop combined according to NetMarketShare.com.

The story looks a bit bleaker from another data source. StatCounter indicates that Safari has a browser share of 31.75% for the United States.

Again, cookies are used to determine whether a user has previously visited and to store information about that user. If cookies have a 7-day expiry, a lot of our performance intelligence goes out the window. The concept of a 90-day look-back window is a wrap. Retargeting beyond 7 days is dead. Effectively, for a sales cycle of any realistic length, the whole concept of attribution outside of last click is over. Oh, and we haven’t even discussed the implications on client-side A/B testing with tools like Optimizely.
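Here’s a small Python sketch of what a 7-day cap does to the “new user” count for a single real person. It’s a simplification under stated assumptions: the function name `classify_visits` is mine, the visit days are invented, and I’m assuming every visit re-sets the cookie so that the clock restarts from the most recent visit.

```python
def classify_visits(visit_days, cookie_lifetime_days=None):
    """Label each visit 'new' or 'returning' the way a cookie-based tool would.

    visit_days: sorted day numbers on which ONE real person visited.
    cookie_lifetime_days: None means the cookie never expires; otherwise the
    cookie is assumed to expire that many days after it was last set.
    """
    labels = []
    cookie_set_on = None  # day the identifying cookie was last written
    for day in visit_days:
        expired = (
            cookie_set_on is not None
            and cookie_lifetime_days is not None
            and day - cookie_set_on > cookie_lifetime_days
        )
        if cookie_set_on is None or expired:
            labels.append("new")
        else:
            labels.append("returning")
        cookie_set_on = day  # assumption: each visit re-sets the cookie
    return labels


# Hypothetical buyer who visits four times over a 40-day sales cycle.
VISIT_DAYS = [0, 3, 12, 40]

if __name__ == "__main__":
    print("no cap:   ", classify_visits(VISIT_DAYS))
    print("7-day cap:", classify_visits(VISIT_DAYS, cookie_lifetime_days=7))
```

Without a cap, this person is one new user followed by three returning visits. With the cap, the visits on days 12 and 40 each look like a brand-new user, so one buyer is counted as three.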

Don’t take my word for it, though. The inimitable Simo Ahava has many more cogent thoughts on the subject. It’s a great article that walks through all the current workarounds and I encourage you to read it.

Make no mistake, this will be a problem that reverberates across the web and breaks a lot of things. Depending on the composition of devices your audience uses to access your brand across your media mix, this can yield a big hole in your ability to understand performance.

Savvy marketing technologists will use a combination of browser fingerprinting and IP tracking to get around these new limitations, but, as I discuss below, that is likely a short-term solution. The more definitive solution for tracking would be for users to remain logged in.

A persistent login uniquely positions Google and Facebook to have more “accurate” intelligence than any other players because they capture the largest logged-in user bases. However, I wonder if this will ultimately get Google in hot water because its ability to circumvent the actions of competitors through the course of its ecosystem potentially suggests a monopoly.

Either way, ITP is about to blow a big hole in your analytics and the functionality of your marketing technology in general, especially if many of your users employ Apple devices.

GDPR and Privacy Overlap

For digital marketers, the EU’s General Data Protection Regulation (GDPR) was our version of Y2K. Everyone rushed to become compliant for fear of the fines that the EU is prepared to levy. As a result, many companies and legal departments are fearful of tracking and using data on their users. To some degree, it’s much ado about nothing. (For the avoidance of doubt, I’m not a lawyer and this is not legal advice. Speak to a lawyer before you end up with a big fine.)

I recently had an eye-opening conversation with a very smart German gentleman who explained to me that the GDPR isn’t meant to be a mechanism to prevent data collection. Rather, it’s meant to be a mechanism to discourage people from tracking any and everything unjustifiably. I was surprised that he was working on a project that requires a lot of tracking and wasn’t afraid of the idea of collecting a ton of implicit data points.

If you are adhering to the GDPR standard, users must have the ability to delete their data and data about themselves from your systems. Inherently, that introduces potential black holes in your data if any meaningful number of people want to delete themselves from your stores.

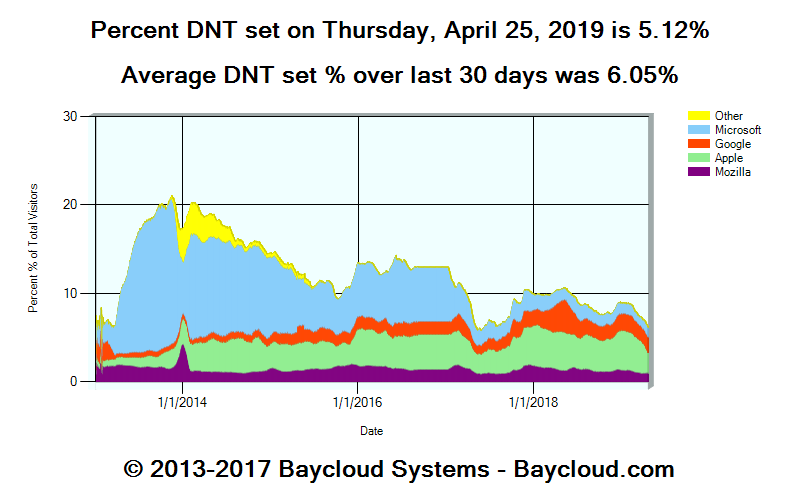

These overzealous (and educated) users probably sit in the middle of the Venn diagram of people who use Tor, enable Do Not Track, and wear tin foil hats with their insatiable need for privacy. I suspect that these are the types of users that disable JavaScript unless they absolutely need it and use the Google Analytics opt-out browser extension.

These users are certainly a potentially existential threat to your clickstream intelligence, but perhaps not as big as other threats.

Firefox is Firing on Browser Fingerprinting & More

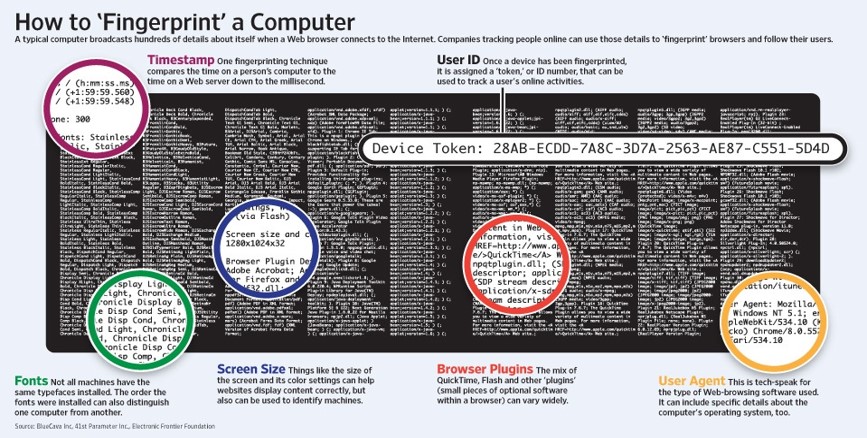

If you attended or watched my 2014 MozCon talk on Digital Body Language, I talked about the concept of browser fingerprinting. The short explanation is that you can use a series of implicit signals being broadcast by a given user’s browser to uniquely identify them. Some of the elements that comprise a browser fingerprint include screen resolution, installed fonts, user agent, operating system, the offset of your computer’s clock to internet time, and so on.

When combined with an IP address, a browser fingerprint becomes a highly accurate set of values for uniquely identifying a user. In fact, a lot of AdTech companies use this technique in lieu of cookies. So, you can see that there is a world where browser fingerprints could replace cookies.
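As a rough sketch of the idea, the Python below hashes a handful of signals, optionally combined with an IP address, into a single identifier. The signal names and values are invented for illustration, and hashing a sorted JSON dump with SHA-256 is just one simple way to do it, not how any particular vendor builds its fingerprints.

```python
import hashlib
import json


def fingerprint(signals, ip_address=None):
    """Return a stable SHA-256 hex digest for a dict of browser signals."""
    payload = dict(signals)
    if ip_address is not None:
        payload["ip_address"] = ip_address
    # Sorting keys makes the digest independent of dict insertion order.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical signals of the kind a page could read from a visitor's browser.
VISITOR = {
    "screen_resolution": "2560x1440",
    "installed_fonts": ["Arial", "Helvetica", "Menlo"],
    "user_agent": "Mozilla/5.0 (Macintosh) Safari/605.1.15",
    "operating_system": "macOS",
    "clock_offset_ms": -42,
}

if __name__ == "__main__":
    print(fingerprint(VISITOR))
    print(fingerprint(VISITOR, ip_address="203.0.113.5"))
```

The same signals always produce the same digest, with no cookie involved, and changing any one signal produces a different one. That second property is what the anti-fingerprinting defenses discussed next take advantage of.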

The anonymous browsing protocol Tor implemented a solution to thwart browser fingerprinting called “Letterboxing.” Effectively, this produces incorrect information about the viewport when you attempt to generate a fingerprint thereby making the technique inconsistent and ineffective.
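To see why that hurts fingerprinting, here’s a rough Python sketch. It assumes the defense works by reporting viewport dimensions rounded down to a fixed step; the 100-pixel steps, the sample viewports, and the function name `letterboxed_size` are illustrative assumptions of mine, not the values Tor actually uses.

```python
def letterboxed_size(width, height, step_w=100, step_h=100):
    """Round a viewport down to fixed steps (step sizes are illustrative assumptions)."""
    return (width // step_w) * step_w, (height // step_h) * step_h


# Three hypothetical visitors with distinct real viewports.
REAL_VIEWPORTS = [(1366, 657), (1342, 698), (1399, 611)]

if __name__ == "__main__":
    for width, height in REAL_VIEWPORTS:
        print((width, height), "->", letterboxed_size(width, height))
```

All three visitors end up reporting the same size, so the viewport stops being a signal that tells them apart.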

But most people aren’t using Tor to browse the web, so who cares?

Well, Firefox has incorporated Letterboxing into its latest version.

Further still, Firefox has introduced Tracking Protection which blocks third-party tracking when the user is in private mode and there are rumblings of Firefox limiting cookies to a 7-day window as well.

With Letterboxing and Tracking Protection, the user has to enable these features, but just like Google’s Secure Search, it’s not hard to make a feature the default once it’s in the wild.

What about Chrome?

As of this writing, Chrome still dominates the browser market share, so you may want to reconsider if you were thinking “I can live with a 15-40% hole in my data.”

From the outside looking in, Google needs to track all the things, but they also seem to want to be a good steward of the web. With the US legislature inching toward data protection regulation and the Internet community pushing for privacy-focused functionality, I suspect that Chrome will be compelled to comply.

In fact, Chrome may need to be a bit more proactive about its approach since it recently came under fire for automatically logging users into Google accounts.

However, what is potentially even more earth-shattering to the Paid Media community is the upcoming addition of the Blink LazyLoad feature. From the specification’s summary alone, marketing managers around the world can hear the sound of their ad impressions going down or being fraudulently inflated (emphasis mine):

“Web pages often have images or embedded content like ads out of view near the bottom of the page, and users don’t always end up scrolling all the way down. LazyLoad is a Chrome optimization that supports deferring the load of below-the-fold images and iframes on the page until the user scrolls near them, in order to reduce data usage, speed up page loads, and reduce memory use.”

The project owners stop being coy when they get to the Compatibility Risks section of the document:

“Ad networks that currently record an impression every time the ad is loaded instead of every time the user actually sees the ad (e.g. using the visibility API) could see a change in their metrics, mainly because LazyFrames could defer ads that would have otherwise been loaded in but never seen by the user. This could also affect the revenue of site owners that use these ads as well. Note that the distance-from-viewport threshold will be tuned such that a deferred frame will typically be loaded in by the time the user scrolls to it.”

In other words, Chrome is in the process of introducing a new feature that will speed up the web at the expense of both advertisers and publishers.

While there’s a lot of discussion in the document about the requirement of attributes being set for lazy loading to be triggered or not triggered, it sounds as though iframes are lazy loaded by default. And, again, what is to stop Chrome from making the lazy loading functionality the default always? I’ll answer that. Nothing.
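The impression gap that the specification describes can be sketched in a few lines of Python. Everything here is a simplifying assumption for illustration: the function name `loaded_ads`, the pixel offsets, and the 800-pixel distance-from-viewport threshold are mine, not values from the Chrome document.

```python
def loaded_ads(ad_offsets_px, max_scroll_px, viewport_px, lazy=False, threshold_px=0):
    """Return the offsets of ad frames that would be loaded during one page view."""
    if not lazy:
        return list(ad_offsets_px)  # eager loading fetches every frame on the page
    # A lazy frame loads once it comes within threshold_px of the visible area.
    reach = max_scroll_px + viewport_px + threshold_px
    return [offset for offset in ad_offsets_px if offset <= reach]


# Hypothetical page: four ad slots, measured in pixels from the top of the page.
AD_OFFSETS = [300, 1800, 4200, 7500]

if __name__ == "__main__":
    eager = loaded_ads(AD_OFFSETS, max_scroll_px=1500, viewport_px=900)
    lazy = loaded_ads(AD_OFFSETS, max_scroll_px=1500, viewport_px=900,
                      lazy=True, threshold_px=800)
    print("impressions counted on load, eager:", len(eager))
    print("impressions counted on load, lazy: ", len(lazy))
```

For a visitor who scrolls 1,500 pixels down, a network that counts an impression on load would record four impressions with eager loading and only two with lazy loading, even though the user’s actual behavior is identical.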

“Not Provided” for Everybody and Everything

All of this overzealous privacy madness is setting us up for a world where digital marketing performance becomes opaque. ITP and its ilk, specifically, will kill the majority of the intelligence that is collected beyond the 7-day window. Say farewell to multi-channel attribution and 90-day look-back windows. Marketers will be spending money blind and living solely on last-click models.

The potential loss of multi-channel attribution is especially concerning because many initiatives, such as Content Marketing, continue to be invested in because of the measurable interplay between channels. If you can’t prove that a user visited your site for the first time 90 days ago due to a piece of content, signed up for the mailing list, and then came back and bought something, you’ll wrongfully assume the content isn’t working for you. You’ll simply be left to guess.

Apple and Firefox are effectively helping the digital marketing experience become more like buying billboards on the information superhighway. Chrome is sacrificing performance intelligence for simple performance.

It all feels like “not provided” on a bigger scale.

Cool, so what do we do?

You can see Simo’s posts for the list of known workarounds for ITP 2.1. Be warned though, I suspect that Apple and the platforms will continue to iterate in response to these hacks. As an example, see the ITP 2.2 update from April 24th that tackles tracking through link decoration.

However, all of the issues we’ve discussed are potentially tremendous blows to everything we do to track performance. It all raises a series of questions:

- Do we need to rethink performance intelligence in general?

- Will we become increasingly reliant upon panel-driven solutions like ComScore and Hitwise?

- What role, if any, can log files play to recover some information?

- Will ISPs get in the game in an attempt to give us more of the data back?

- Will portable user identity specifications and solutions gain more adoption?

- Or, will we live and die by the data that the channels give us on their native platforms?

After all, the loss of persistent user tracking just makes the wealth of users always logged in to Facebook and Google that much more valuable.

Review the Problem Segments

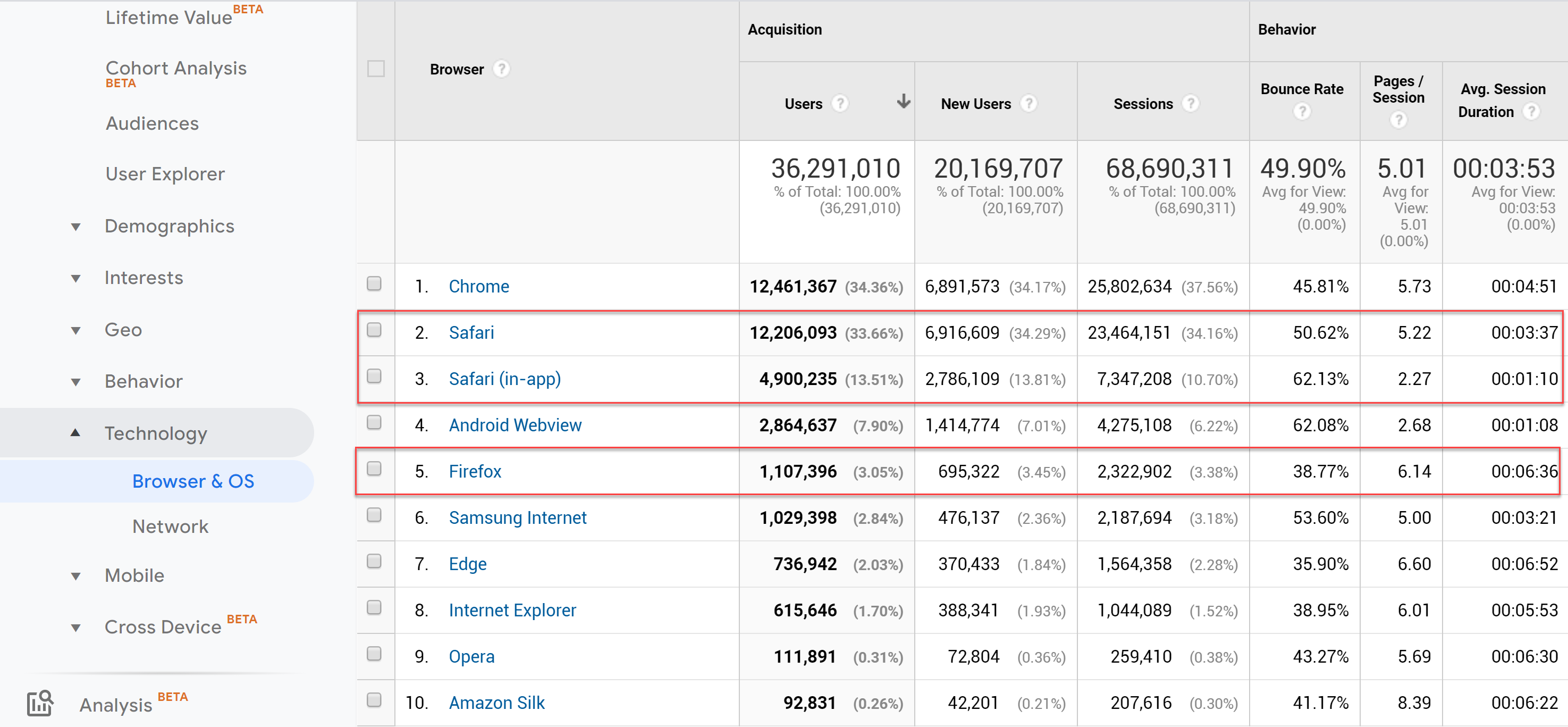

In the meantime, you should review which devices and user agents your traffic comes from. This is your first step to determining how at-risk your data is. For Google Analytics users, head to Audience > Technology > Browser & OS and review how much of your traffic is Safari and Firefox.

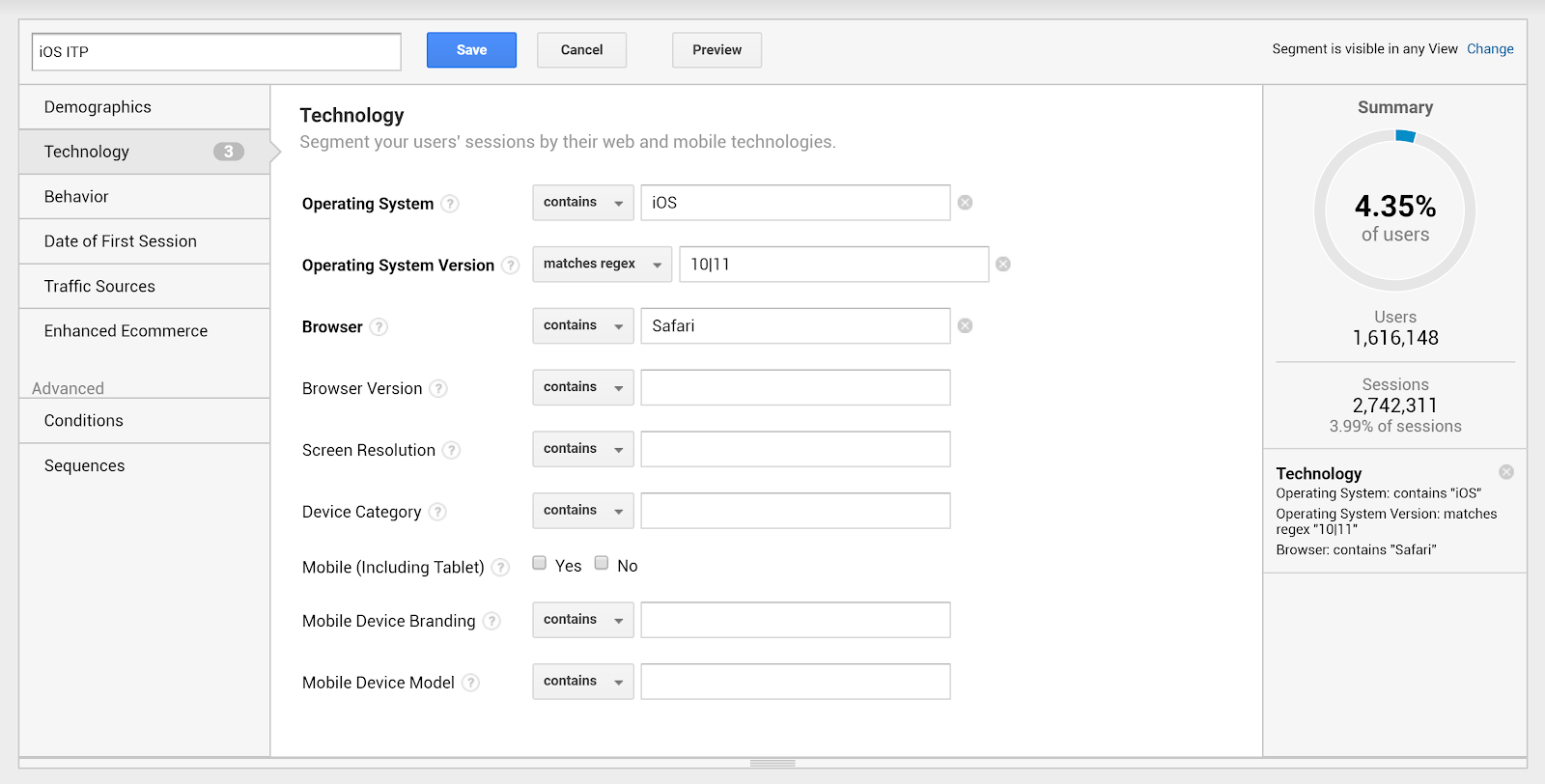

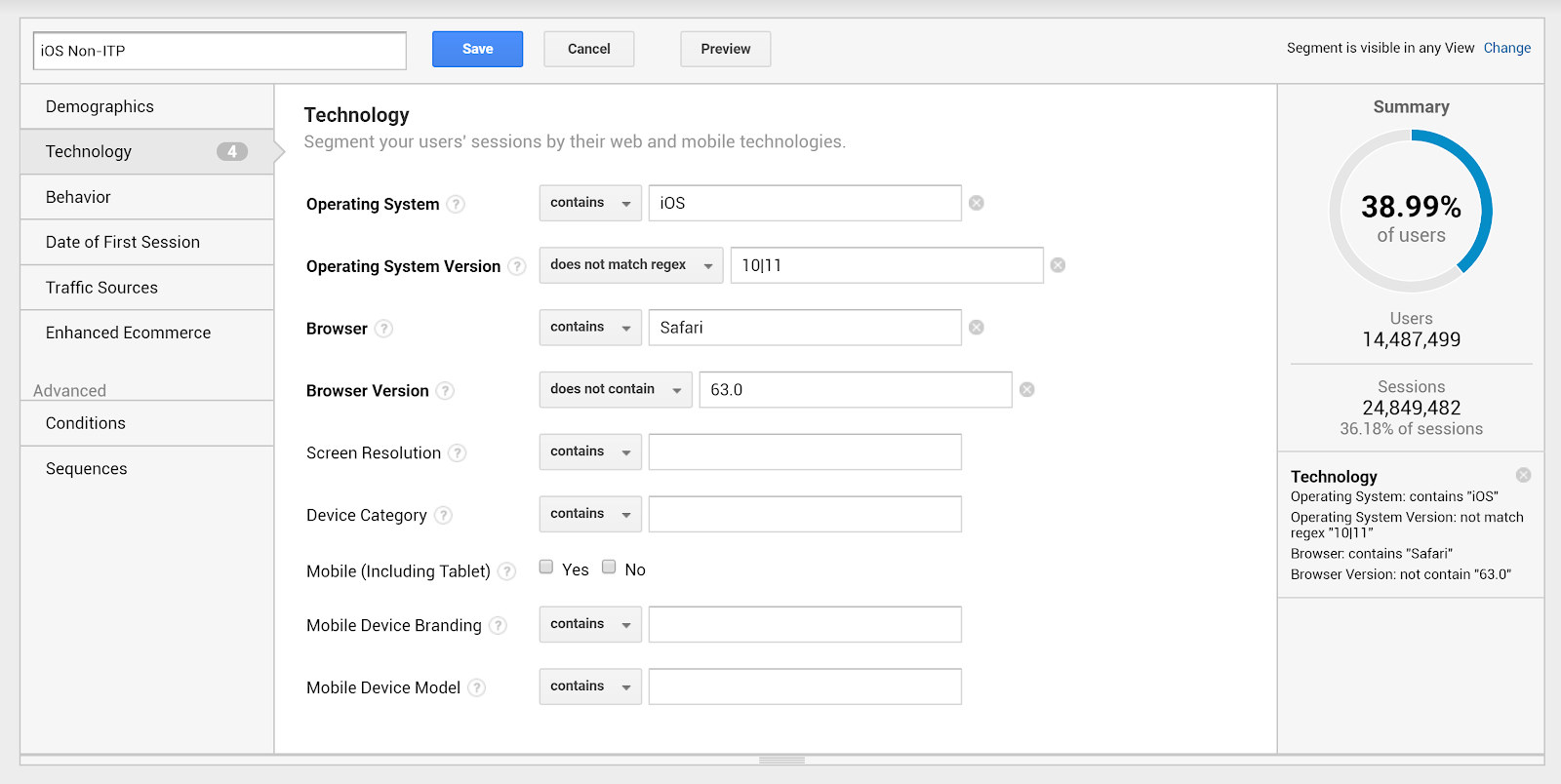

Create segments for these ITP-enablers and keep an eye on the artificial growth of the new user segments. Also, keep an eye on how your multi-channel funnels shift over time. Consider this example wherein I’ve set up segments for iOS with ITP and iOS without:

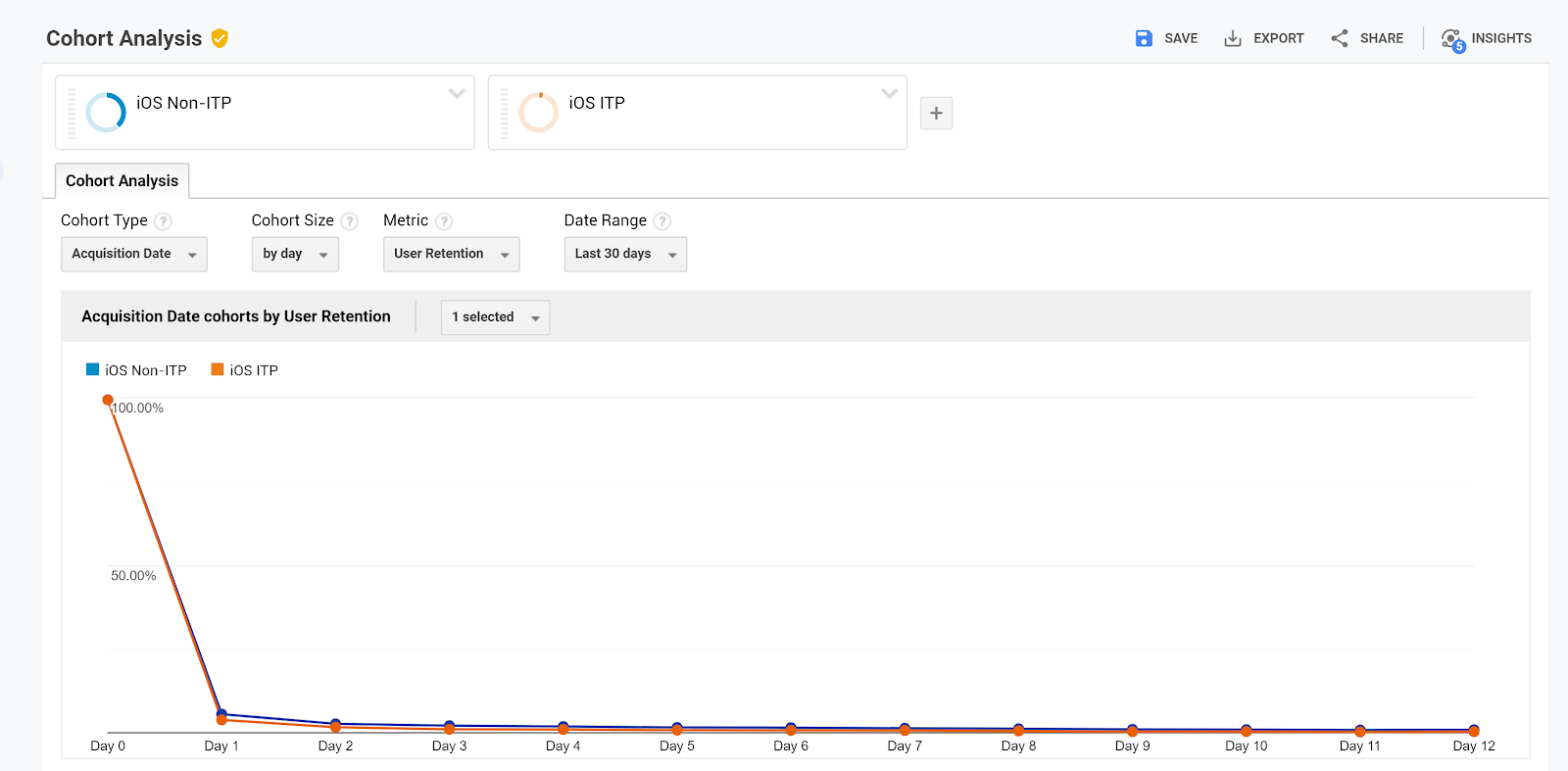

Once you’ve set them up, compare the two segments in the Cohort Analysis section. While it may be the result of a variety of other behavioral factors, thus far I’ve found that ITP may not work completely as intended. For the sites where I’ve done these comparisons, I’ve found that the user data does not go completely to zero after day 7. Rather, I see that the ITP cohort gets closer to zero a lot faster than the non-ITP cohort.
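If you export the raw visits for a segment, the comparison boils down to a retention curve per segment. Here’s a minimal Python sketch of that calculation; the function name `retention_by_day`, the `(user_id, day_number)` input shape, and the sample data are assumptions for illustration, not something the Cohort Analysis report hands you in this form.

```python
from collections import defaultdict


def retention_by_day(visits, max_day=14):
    """Share of a segment's users seen again N days after their first visit.

    visits: iterable of (user_id, day_number) pairs for ONE segment, where
    user_id is whatever identifier the analytics tool assigned.
    """
    days_by_user = defaultdict(set)
    for user_id, day in visits:
        days_by_user[user_id].add(day)
    if not days_by_user:
        return {}
    returned = defaultdict(int)
    for days in days_by_user.values():
        first = min(days)
        for day in days:
            offset = day - first
            if 0 < offset <= max_day:
                returned[offset] += 1
    total = len(days_by_user)
    return {offset: returned[offset] / total for offset in range(1, max_day + 1)}


# Hypothetical segment: three users, two of whom come back on day 2 and one on day 9.
SAMPLE_VISITS = [("a", 0), ("a", 2), ("b", 0), ("b", 9), ("c", 1), ("c", 3)]

if __name__ == "__main__":
    for offset, share in retention_by_day(SAMPLE_VISITS, max_day=10).items():
        print(f"day {offset}: {share:.0%}")
```

Run it once per segment and compare the curves. If ITP is doing what it says, the ITP segment’s curve should fall away much faster after day 7, because returning visitors start showing up under new identifiers.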

You can do the same for macOS 10.13 and 10.14 and Firefox 63+.

Annotate Your Analytics for Release Dates

Annotate your analytics with release dates for ITP and stay up to date on the release of Blink LazyLoad. The dates for ITP releases are as follows:

- 2.2 – 4/24/2019

- 2.1 – 2/21/2019

- 2.0 – 6/4/2018

- 1.1 – 3/14/2018

- 1.0 – 6/5/2017

Check the Chrome release notes to find out when Blink LazyLoad goes live, and annotate your Display, Retargeting, and Programmatic reporting when it does. Keeping track of these dates may help you identify when you have been struck by data anomalies.

Wrapping Up

For Apple, ITP is a quiet weapon for a silent war for “privacy.” Much like Google’s introduction of not provided, I interpret it as a mechanism for them to throw a wrench into the operations of companies who rely on that data. Nevertheless, it’s just the latest in a series of acts by companies and governments that hamper digital marketers’ ability to understand and target their audiences. There’s no way to prevent the upcoming datapocalypse, but it’s good to be aware of its impact.

For marketers worldwide, the biggest fear should be that digital marketing devolves into an immeasurable mess. While the platforms are hashing out our fate, now is a good time to rethink what you’re collecting as a first party as well as how to get users to log in and stay logged in. If that’s not an option, I guess you can write your Congressman?

Now over to you, what issues have you seen with analytics? What’s on the way that concerns you about your data?