For years, video SEO was treated as a distinct discipline, but in the age of AI Search, that separation is outdated. Today, YouTube is not merely a social platform; it is often the dominant source of information for LLMs and AI Search engines.

According to BrightEdge data from October 2025, YouTube was cited 200 times more often than any other video platform in AI search results. Competitors like TikTok, Vimeo, and Twitch barely register (each holding roughly 0.1 percent or less of citations), which makes YouTube effectively the only video source that matters for AI engines.

Marketers need to pay attention: If video hasn’t been a part of the marketing strategy before, it definitely should be now. A comprehensive omnimedia content strategy is vital for AI Search.

The scale of YouTube’s current supremacy in search extends beyond video queries. The platform is often the primary source for general informational queries as well, even surpassing established authorities.

Next, let’s look at how people are searching these days, and why YouTube keeps showing up so often.

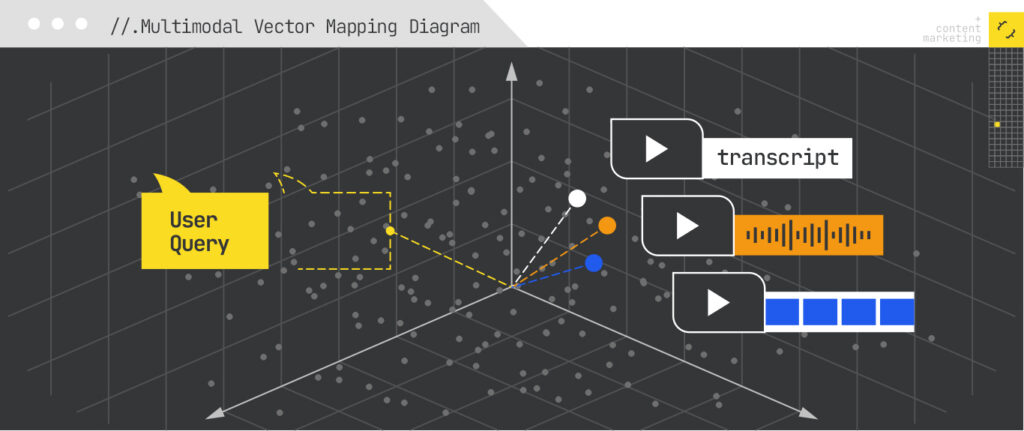

Much like Google itself, YouTube acts as a search engine that relies on vector embeddings: numerical representations (vectors) of content, positioned so that items with similar meaning sit close together. AI Overviews and AI Mode rely on embeddings as well, and, as with Google, these embeddings are multimodal, covering video, images, and text.

Google and other engines watch and listen to content by converting video transcripts, images, and audio into mathematical representations in a high-dimensional space. This allows the AI to measure the semantic relevance of the actual spoken content against a user’s query.

The shift fits well with Relevance Engineering, which focuses on multimodal relevance across all surfaces. iPullRank’s 2025 research into over 100,000 videos revealed that the strongest ranking signal is no longer just the title, but the relevance of specific segments within the video transcript to the user’s intent.

Knowing this provides insight into how to optimize video content for better visibility in AI Search.

To get your videos cited in AI Overviews and ranked in AI Mode, you must treat your video scripts with the same SEO rigor you apply to web pages. Here are the essential steps:

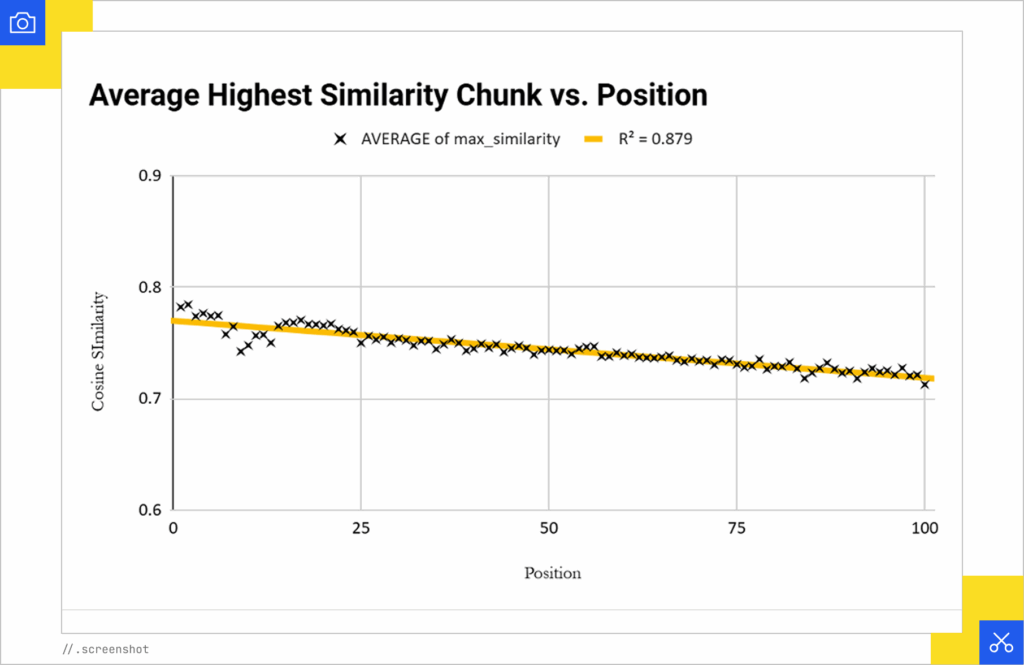

The single strongest predictor of high ranking is the semantic relevance of your transcript’s best segment to the search query.

So you must ensure your script explicitly answers the core question of your target keyword. Our research showed a 0.937 correlation between the relevance of the transcript and ranking position. If the AI cannot find a chunk of text in your transcript that semantically matches the query, the video is unlikely to be cited.
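The idea of scoring a transcript by its single best segment can be illustrated with a minimal sketch. It assumes the transcript has already been split into segments, and it uses plain word counts as a toy stand-in for real embeddings. The function names (`bag_of_words`, `cosine`, `best_segment`) and the sample transcript are hypothetical, and this is not the method used in the research described above.

```python
import math
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def best_segment(query: str, segments: list[str]) -> tuple[int, float]:
    """Return (index, score) of the segment most similar to the query."""
    q = bag_of_words(query)
    scores = [cosine(q, bag_of_words(s)) for s in segments]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores[best]


# Hypothetical transcript, already split into segments.
segments = [
    "Hey everyone, welcome back to the channel, hit subscribe.",
    "To fix a leaky faucet, shut off the water and replace the worn washer.",
    "Thanks for watching, see you next week.",
]
index, score = best_segment("how to fix a leaky faucet", segments)
print(index, round(score, 3))
```

Only the second segment shares meaningful vocabulary with the query, so it wins. A real pipeline would swap `bag_of_words` for an embedding model so that paraphrases match as well as exact words.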

Where you place the answer to the query matters. We found a positive correlation between ranking success and the most relevant segment being located within the first 30 seconds of the video.

The takeaway: Avoid long, meandering intros. Instead, state the problem and the solution clearly at the very beginning of the video, to capture both user attention and algorithmic relevance.
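A script can be checked against this guidance with a simple test: does the most relevant segment start inside the opening window? The sketch below assumes each segment already has a start time and a relevance score computed elsewhere. `ScoredSegment`, `answers_early`, and the sample numbers are hypothetical, and the 30-second default simply mirrors the finding above.

```python
from dataclasses import dataclass


@dataclass
class ScoredSegment:
    start_seconds: float  # where the segment begins in the video
    relevance: float      # similarity to the target query, computed elsewhere


def answers_early(segments: list[ScoredSegment], window_seconds: float = 30.0) -> bool:
    """True if the most relevant segment starts inside the opening window."""
    if not segments:
        return False
    best = max(segments, key=lambda s: s.relevance)
    return best.start_seconds < window_seconds


# Illustrative scores only.
slow_intro = [ScoredSegment(0, 0.10), ScoredSegment(45, 0.62), ScoredSegment(90, 0.30)]
direct_open = [ScoredSegment(0, 0.58), ScoredSegment(45, 0.41), ScoredSegment(90, 0.22)]

print(answers_early(slow_intro))   # False
print(answers_early(direct_open))  # True
```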

While transcripts are king, traditional metadata still plays a vital role. Title relevance and description relevance remain the second and third strongest signals for visibility.

Therefore, your title and description should use natural language that closely matches the intent of the searcher. This increases the cosine similarity (a measure of how closely two vectors point in the same direction) between the user’s prompt and your content.
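For reference, the standard definition of cosine similarity is shown below, with $\mathbf{q}$ standing for the embedding of the user's prompt and $\mathbf{c}$ for the embedding of a title, description, or transcript segment. The symbols are illustrative; how any particular engine weights or combines these scores is not something this formula captures.

```latex
\[
\operatorname{sim}(\mathbf{q}, \mathbf{c})
  = \cos\theta
  = \frac{\mathbf{q} \cdot \mathbf{c}}{\lVert \mathbf{q} \rVert \, \lVert \mathbf{c} \rVert}
\]
```

The value ranges from -1 to 1. Vectors that point in nearly the same direction score close to 1, regardless of their length.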

AI Search engines still rely on traditional authority signals to determine trust.

Use the Keyword Opposition to Benefit (KOB) metric: Identify keywords for which the median view count is high but the median subscriber count of ranking channels is low. These are gaps where smaller channels can compete.
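One way to turn this description into a number is to divide the median view count of ranking videos by the median subscriber count of their channels. That ratio is an assumption made for illustration, since no exact formula is stated here. The `kob_score` function and the keyword data below are hypothetical.

```python
from statistics import median


def kob_score(views: list[int], subscribers: list[int]) -> float:
    """Median views of ranking videos divided by median subscribers of their channels."""
    median_subs = median(subscribers)
    if median_subs == 0:
        return float("inf")
    return median(views) / median_subs


# Hypothetical data: keyword -> (views per ranking video, subscribers per ranking channel)
keywords = {
    "crm software review": ([900_000, 400_000, 650_000], [2_000_000, 1_500_000, 3_000_000]),
    "crm data migration checklist": ([80_000, 120_000, 95_000], [4_000, 12_000, 7_500]),
}

# Highest score first: many views relative to channel size suggests an opening.
ranked = sorted(keywords, key=lambda k: kob_score(*keywords[k]), reverse=True)
for keyword in ranked:
    print(keyword, round(kob_score(*keywords[keyword]), 2))
```

A ratio alone can favor keywords with very little demand, so in practice it may make sense to apply a minimum median view threshold before ranking.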

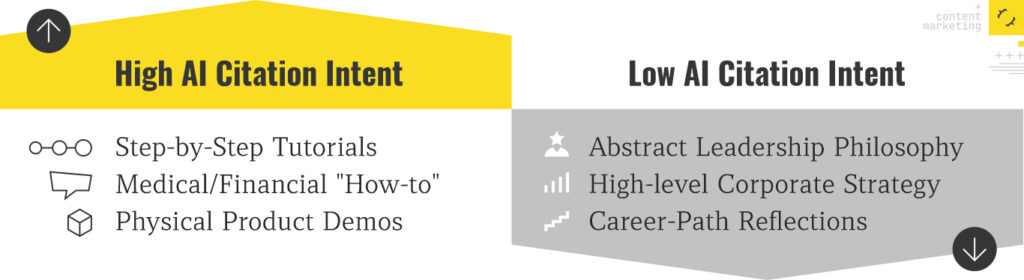

Not all queries trigger video citations. Our data suggests that YouTube citations are concentrated in specific query intents.

This new search behavior and the growing use of LLMs require new metrics to measure the success of video content.

We are now in an omnimedia search environment where the format of the content can vary greatly and may matter less than its semantic value. However, because YouTube is the undisputed database of record for video in AI models, it must be a pillar of every AI optimization strategy.

By focusing on transcript relevance and authoritative delivery, brands can ensure they’re answering the user’s question, whether that user is reading text or watching a clip in an AI Overview.


